This article is a preview from the Autumn 2016 edition of New Humanist.

In a 2008 article in Wired magazine entitled “The End of Theory”, Chris Anderson argued that the vast amounts of data now available to researchers made the traditional scientific process obsolete. No longer would they need to build models of the world and test them against sampled data. Instead, the complexities of huge and totalising datasets would be processed by immense computing clusters to produce truth itself: “With enough data, the numbers speak for themselves.” As an example, Anderson cited Google’s translation algorithms which, with no knowledge of the underlying structures of languages, were capable of inferring the relationship between them using extensive corpora of translated texts. He extended this approach to genomics, neurology and physics, where scientists are increasingly turning to massive computation to make sense of the volumes of information they have gathered about complex systems. In the age of big data, he argued, “Correlation is enough. We can stop looking for models.”

This belief in the power of data, of technology untrammelled by petty human worldviews, is the practical cousin of more metaphysical assertions. A belief in the unquestionability of data leads directly to a belief in the truth of data-derived assertions. And if data contains truth, then it will, without moral intervention, produce better outcomes. Speaking at Google’s private London Zeitgeist conference in 2013, Eric Schmidt, Google’s chairman, asserted that “if they had had cellphones in Rwanda in 1994, the genocide would not have happened.” Schmidt’s claim was that technological visibility – the rendering of events and actions legible to everyone – would change the character of those actions. Not only is this statement historically inaccurate (there was plenty of evidence available of what was occurring during the genocide from UN officials, US satellite photographs and other sources), but its underlying premise is also demonstrably untrue. Analysis of unrest in Kenya in 2007, when over 1,000 people were killed in ethnic conflicts, showed that mobile phones not only spread but accelerated the violence. But you don’t need to look to such extreme examples to see how a belief in technological determinism underlies much of our thinking and reasoning about the world.

“Big data” is not merely a business buzzword, but a way of seeing the world. Driven by technology, markets and politics, it has come to determine much of our thinking, but it is flawed and dangerous. It runs counter to our actual findings when we employ such technologies honestly and with the full understanding of their workings and capabilities. This over-reliance on data, which I call “quantified thinking”, has come to undermine our ability to reason meaningfully about the world, and its effects can be seen across multiple domains.

The assertion is hardly new. Writing in the Dialectic of Enlightenment in 1947, Theodor Adorno and Max Horkheimer decried “the present triumph of the factual mentality” – the predecessor to quantified thinking – and succinctly analysed the big data fallacy, set out by Anderson above. “It does not work by images or concepts, by the fortunate insights, but refers to method, the exploitation of others’ work, and capital … What men want to learn from nature is how to use it in order wholly to dominate it and other men. That is the only aim.” What is different in our own time is that we have built a world-spanning network of communication and computation to test this assertion. While it occasionally engenders entirely new forms of behaviour and interaction, the network most often shows to us with startling clarity the relationships and tendencies which have been latent or occluded until now. In the face of the increased standardisation of knowledge, it becomes harder and harder to argue against quantified thinking, because the advances of technology have been conjoined with the scientific method and social progress. But as I hope to show, technology ultimately reveals its limitations.

* * *

Moore’s Law provides a useful starting point to examine the way in which technological theory becomes social practice. Driven by markets, it has become dangerously entrenched in the idea of progress. Formulated by Gordon Moore in 1965, Moore’s Law predicts that the number of transistors in an integrated circuit or microchip doubles approximately every two years. While the exact figure has varied over the years, the theory has proved remarkably resilient, and the result has been a near-constant upward curve, powering the last 50 years of economic growth, and leading to a corresponding belief in the utility and inevitability of technological innovation. Moore’s Law has been used to explain productivity cycles, biotechnology advances, digital camera resolutions and a host of other entangled processes, and has become so engrained in scientific thinking that every succeeding technology, from batteries to solar power, is judged on a similar scale.

Implicit in Moore’s Law is not only the continued efficacy of technological solutions, but their predictability, and hence the growth of solutionism: the belief that all of mankind’s problems can be solved with the application of enough hardware, software and technical thinking. Solutionism rests on the belief that all complex activities are reducible to measurable variables. Yet this is slowly being revealed as a fallacy, as our technologies become capable of processing ever larger volumes of data. In short, our engineering is starting to catch up with our philosophy.

“Eroom’s Law” – Moore’s Law backwards – was recently formulated to describe a problem in pharmacology. Drug discovery has been getting more expensive: since the 1950s, the number of drugs approved for use in human patients per billion US dollars spent on research and development has halved roughly every nine years. This problem has long perplexed researchers, because according to the principles of technological growth, the trend should run in the opposite direction. In a 2012 paper in Nature Reviews Drug Discovery entitled “Diagnosing the decline in pharmaceutical R&D efficiency”, the authors propose and investigate several possible causes. They begin with social and physical influences, such as increased regulation, increased expectations and the exhaustion of easy targets (the “low-hanging fruit” problem). Each of these is – with qualifications – disposed of, leaving open the question of the discovery process itself.

In the last 20 years drug discovery has experienced a major strategic shift, away from small teams of researchers intensively focused on small groups of molecules, and towards wide-spectrum, automated search for potential reactions within huge libraries of compounds. This process – known as high-throughput screening or HTS – is the industrialisation of drug discovery. Picture a cross between a modern car factory, all conveyor belts and robot arms, and a datacentre – rack upon rack of trays, fans and monitoring equipment – and you’re closer to the contemporary laboratory than older visions of (predominantly) men in white coats tinkering with bubbling glassware. HTS prioritises volume over depth. It strip-mines the chemical space, simultaneously testing thousands of molecules against a biological target. At the same time, it reveals the almost ungraspable extent of that space, and the impossibility of modelling all possible interactions. Drug discovery is faltering, suggest the paper’s authors, because the pharmaceutical industry believes that brute-force exploitation of data is superior to “the messy empiricism of older approaches”. The dominance of HTS fails to account both for the biases of its own sample sets (libraries of molecular samples assembled by other corporations, which frequently overlap) and the social conditions which produce automation itself. “Automation, systematisation and process measurement have worked in other industries,” write the authors. “Why let a team of chemists and biologists go on a trial and error-based search of indeterminable duration, when one could quickly and efficiently screen millions of leads against a genomics-derived target, and then simply repeat the same industrial process for the next target, and the next?”

It has taken 20 years of blind faith in data to realise the limitations of this approach, and for advocates to start to make the case for those messier empiricisms once again. The highly successful pharmaceutical researcher Sir James Black, best known for his work developing beta-blockers to treat heart disease, coined the word “obliquity” to describe this basic principle: “you are often most successful in achieving something when you are trying to do something else.” In light of this realisation, many drug companies are today seeking to combine HTS with broader, human-led exploration of chemical space. Such an approach is not surprising if you examine the ways in which brute-force discovery and human intelligence have been combined in another fiendishly challenging problem: chess.

* * *

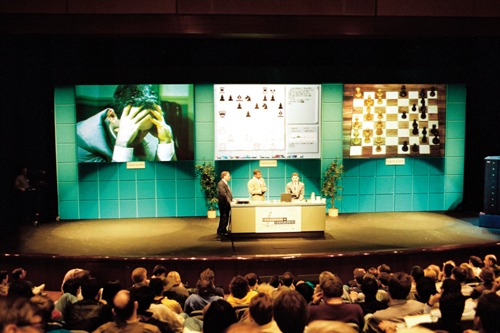

Since the 1950s, enthusiasts have been promoting the use of computers to automate the game of chess. In 1997, after many advances and setbacks, IBM’s Deep Blue finally bested the world’s greatest player, Garry Kasparov. Deep Blue’s approach was analogous to that of HTS: it crawled across the possibility space of all potential moves and determined the most profitable route to take. For many, the defeat of human ingenuity by the machine signalled an epochal change in the relationship between automated and natural thought. But something strange happened to chess after 1997. Instead of capitulating, its masters rethought their own thinking. The following year, Kasparov opened the first tournament of what has come to be known as Advanced Chess. In Advanced Chess, players are allowed to use a computer to assist them – and the results have been revelatory. Thanks to hardware and software improvements in the years since Deep Blue’s victory, even relatively weak computers now routinely beat Grandmasters at tournament level. But as Advanced Chess has shown, relatively weak computers working alongside human players will wipe the floor with the most advanced supercomputers. Collaborative rather than oppositional thinking has yielded radical advances in chess theory, and opened up whole new areas of play.

Both the machinic automation of high-throughput screening and the brute-force calculation of computer chess are examples of quantified thinking: the reduction of the field to data alone, to that which can be quantified, and thus processed and appraised with the illusion of dispassion. But no process is dispassionate, or separate from psychological and social influence, and so quantified thinking inevitably fails. In computer chess and HTS we see examples of our technologies becoming so advanced that they reveal the weaknesses of our assumptions about them.

The collaborations that have resulted from the realisation of this weakness in chess and pharmacology would not be possible without the increased understanding of the way machine thinking functions, and our acknowledgement of its limitations in the face of intense social and commercial pressures. But to stop at these examples ignores both the violence that continues to be done in the name of quantified thinking, and the wider implications such thinking has for our relationship to the world. This violence is seen most clearly when quantified thinking is applied to domains of knowledge which, through their position as weapons of power, admit no such critique or appraisal.

In September 2010, a US airstrike in a remote region of Afghanistan killed ten people, including a man whom an International Security Assistance Force (ISAF) press release identified as Muhammed Amin. According to ISAF, this Amin was the Taliban “shadow governor” of Takhar province and a senior member of the Islamic Movement of Uzbekistan (IMU). But in the weeks that followed, an increasing number of voices, from Afghan officials to Western reporters, claimed that the strike had hit a convoy of campaign workers in the national elections, and that “Amin” was in fact Zabet Amanullah, a former Taliban commander now working with the government. Amanullah had returned to his home province after nearly a decade to support the election and local reconstruction. Reporters even found the “real” Muhammed Amin living in Pakistan. But despite these claims, US commanders continued to state that Amanullah was Amin, and that secret intelligence confirmed “beyond all doubt” that they had hit the correct target.

Kate Clark, a former BBC Kabul correspondent, has conducted an extensive study of the Takhar killings, and her report for the Afghanistan Analysts Network gives us some insights into how the wrong person was selected by US intelligence – a process, and an outcome, which is far from unique. Based on information from a detainee in US custody, intelligence units had mapped out a network of mobile phones used by alleged Taliban and IMU members: a set of SIM cards which implicated their owners in extremist activity. It was alleged that one of these SIM cards received regular calls from Muhammed Amin. Some time later, it was passed to him, at which point he began to “self-identify” as Zabet Amanullah – to use that name as an alias. But this name was not an alias, and as biographies of the two men were to prove, their identities had been confused, a confusion compounded by US forces’ poor grasp of local politics. Clark writes: “When pressed about the existence – and death – of an actual Zabet Amanullah, they argued that they were not tracking a name, but targeting the telephones.”

This process is the same as that used to identify “a senior Al-Qaeda member” in a 2012 US National Security Agency document released by Edward Snowden. This top-secret document singled out Ahmad Muaffaq Zaidan, including his photograph and terror watch list identification number, based on intercepted phone calls. The surveillance records showed Zaidan’s phone number contacting numerous terrorist operatives, and travelling frequently between Pakistan, Afghanistan and the Middle East. This behaviour matched the profile of an Al Qaeda courier, one possibly working with Osama bin Laden himself. What the document fails to record is that Ahmad Muaffaq Zaidan is Al Jazeera’s Islamabad bureau chief. To the algorithmic imagination, the practice of journalism and the practice of terrorism appear to be functionally identical. In Turkey, Tayyip Erdoğan has called for a new law which would designate journalists as terrorists: “It is not only the person who pulls the trigger, but those who made that possible who should be defined as terrorists, regardless of their title.” In Britain, the Metropolitan Police justified the arrest of David Miranda, partner of the investigative journalist Glenn Greenwald, by describing the release of secret documents as “designed to influence a government” and “made for the purpose of promoting a political or ideological cause. This therefore falls within the definition of terrorism.” Quantified thinking dispenses with the verbiage and simply reproduces such politics in inscrutable code.

Zaidan’s misidentification was not fatal, but Amanullah’s was, and it was not an isolated event. Information encoded as data and divorced from other forms of understanding is not merely prejudicial but violent. In the words of former NSA director Michael Hayden: “we kill people based on metadata”. As Anita Gohdes, a researcher of conflict violence at the University of Mannheim, has shown, the manipulation of information is not merely propaganda, but a weapon in itself. Gohdes studied internet blackouts in the Syrian conflict to demonstrate how Bashar al-Assad’s government used its control of the internet not to prevent coverage of attacks, but to facilitate the attacks themselves: “cutting off all connections has the potential of constituting a tactic within larger military offensives.”

* * *

And so we see that the violence done in cases like these is threefold: to the bodies disintegrated by Hellfire missiles or barrel bombs, to a society subjected to decisions whose operation is illegible, either through technological obscurity or governmental secrecy, and to our cultural conception of knowledge when it is produced in ways which we cannot understand or critique. This violence is visible through the lens of the very technologies that produce it, and it is a violence done to our ability to meaningfully engage with the world. Despite the views of someone like Hayden, it is possible for those who operate surveillance to see the destructive power of all-consuming quantification. William Binney, a former technical director of the NSA, told a British parliamentary committee in January 2016 that bulk collection of communications data was “99 per cent useless”. “This approach costs lives, and has cost lives in Britain because it inundates analysts with too much data.” This “approach” is the collect-it-all, brute-force process of quantified thinking.

When such thinking has become engrained in culture, its debased form spreads into all ways of seeing and perceiving the world. I would argue that the equivalent of quantified thinking is to be found in conspiratorial and anti-scientific ways of thinking too – and, crucially, they are produced by the same reductionism. They are not a mirror to science, but an analogue, one based on the same core assumptions. The view that more information uncritically produces better decisions is visibly at odds with our contemporary situation.

In a study entitled Engineers of Jihad published in 2007 (and updated to book length this year), Oxford sociologists Diego Gambetta and Steffen Hertog analysed the biographies of 404 known members of violent Islamist groups, and compared them to both non-violent Islamist groups and non-Islamic extremists. They found that one educational background was disproportionately represented among Islamic radicals: engineering. They also found that this overrepresentation of engineers “occurs not only throughout the Islamic world but even among Western-based extremists” and “seems insensitive to country variations”. The authors attribute this finding to the interaction between “the special social difficulties faced by engineers in Islamic countries” and “engineers’ peculiar cognitive traits and dispositions”.

To explain those “peculiar cognitive traits and dispositions”, the authors cite a British intelligence dossier revealed by the Sunday Times in 2005. It noted that “[Islamist] extremists are known to target schools and colleges where young people may be very inquisitive but less challenging and more susceptible to extremist reasoning/arguments”. But are engineers more susceptible to extremist arguments in general?

Looking at the political affiliations of engineers in general, the authors discovered an overwhelming conservative bias: “The proportion of engineers who declare themselves to be on the right of the political spectrum is greater than in any other disciplinary group.” Violent jihadism and other forms of right-wing extremism share two characteristics identified by the sociologists Seymour Martin Lipset and Earl Raab: “monism” and “simplism”. Monism is described as “the tendency to treat cleavage and ambivalence as illegitimate […] the repression of difference and dissent, the closing down of the market place of ideas”, while simplism is the “unambiguous ascription of single causes and remedies for multifactored phenomena”.

This problem is best encapsulated in cybernetics, the study of control and communication in systems and, specifically, the way control and communication work together. Critical to cybernetics is a belief in the ability to map the entire system and thus to assert control over it. This has led the German scholar Reinhard Schulze to suggest that radical Islam is a “cybernetic view of society”, in which a totalising worldview propels right-wing tendencies towards violence. But this trajectory also underlies all other cybernetic philosophies, including quantified thinking. The reduction of the problem space and the replacement of causation with correlation are, consciously or not, the mechanisms of data processing.

This counter-intuitive association of increased information with reductive doctrinal extremism should not be so surprising, and it is testament to our own cognitive biases that it remains so. And yet the evidence surrounds us, made visible by the very machinery we have built to enact it, from medical research to global surveillance, and from violent extremism to conspiracy theory. The results we claim from such frameworks are not models of the world itself, but models of complexity; their poor fit not a problem with the model but the experimental result itself. Instead of renouncing models, as Anderson pleaded, we must recognise we have produced a new one, of complex entanglement beyond the reach of systemic analysis.

* * *

ISIS has a term for the slippery, almost ungraspable terrain we now find ourselves in, although for extremists it is given as a term of abuse. This is “the grey zone”: a world of limited knowability and existential doubt, horrifying to the extremist and the empiricist alike. In this world we are forced to acknowledge the narrow extent of empirical reckoning and turn again to other forms of knowledge-making: more contingent, more entangled and more networked. The appearance of the grey zone, which has always been there, but unseen beyond the frontiers of our limited, un-networked domains of knowledge, is not a failure of comprehension but a recognition of a new kind of understanding. This understanding cannot be separated from our own knowledge-making practices. The world is not something we study neutrally, that we gather neutral knowledge about, on which we can act neutrally. Rather, we make the world by understanding it, and the way we understand it changes it. In the words of the physicist and philosopher Karen Barad, “Making knowledge is not simply about making facts but about making worlds, or rather, it is about making specific worldly configurations – not in the sense of making them up ex nihilo, or out of language, beliefs, or ideas, but in the sense of materially engaging as part of the world in giving it specific material form.”

Quantified thinking is the dominant ideology of contemporary life: not just in scientific and computational domains but in government policy, social relations and individual identity. It exists equally in qualified research and subconscious instinct, in the calculations of economic austerity and the determinacy of social media. It is the balance on which we have placed our ability to act in the world, while fundamentally mistaking the basis for such actions. “More information” does not produce “more truth”; it endangers it. We cannot stand as dispassionate observers of supposedly truth-making processes while they continue to fail to produce dispassionate ends. Rather, we need to restate in new terms, in light of our technologies and in respect of them, the importance of acknowledging complexity and doubt in our thinking about the world.