As far back as antiquity, philosophers have dreamed of a future where technology could ease the burden of work for mankind. In his most famous book, Politics – written in 350 BC – Aristotle declared that “If every instrument could accomplish its own work . . . chief workmen would not want servants, nor masters slaves.”

While the technology that was available to Aristotle in ancient Athens seems worlds away from what Silicon Valley has on tap today, the questions about the fate of the human species remain the same: what will happen when technology becomes more advanced? Will we live in a more egalitarian society if less work needs to be done? And what role will human beings play if machines can do all the work that is needed to live comfortably?

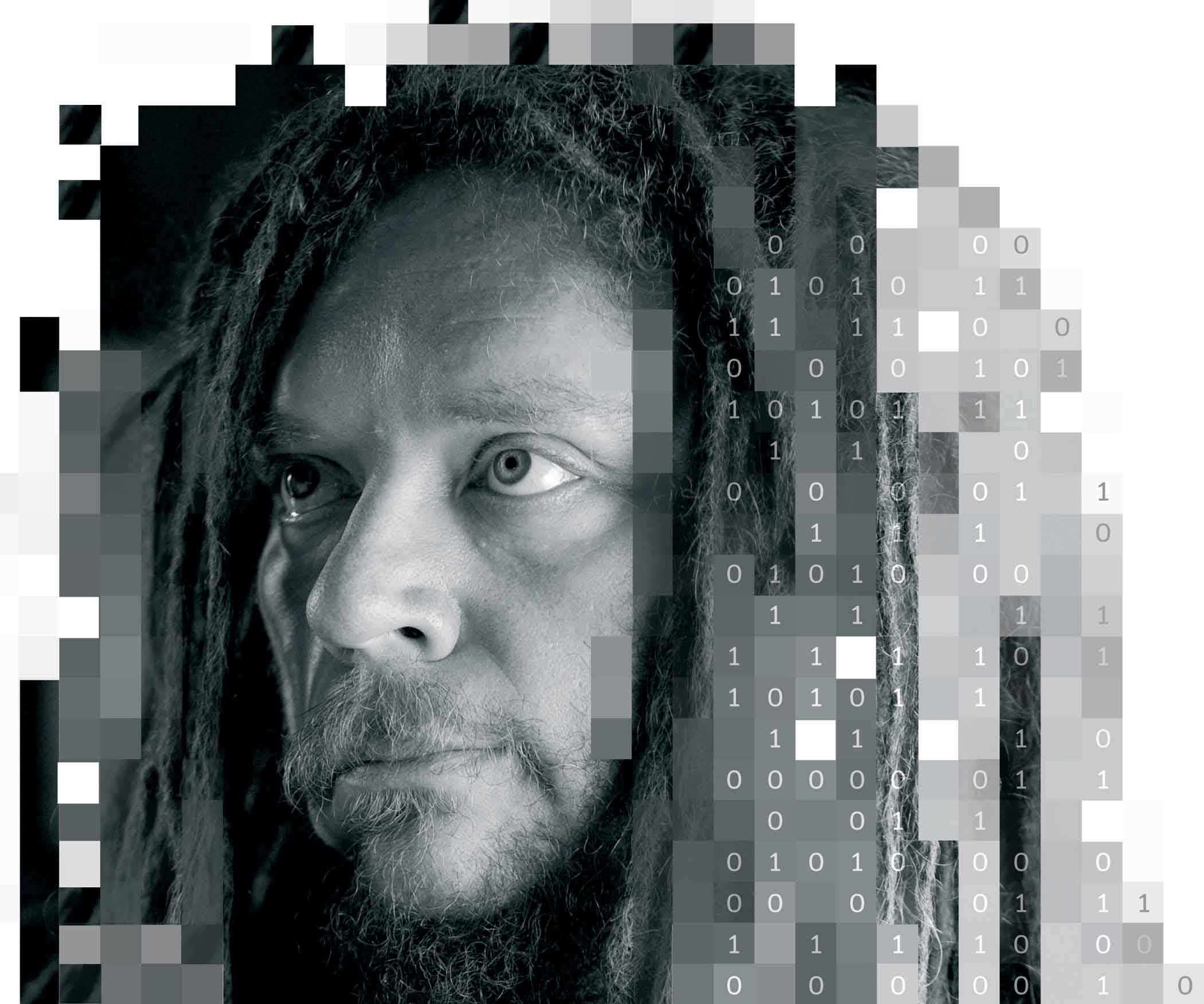

According to computer scientist and philosopher Jaron Lanier, by the time we have reached this last question, a mistake has already been made.

“These technologies don’t exist on their own: they are patterns of human behaviour. Take Google Translate for example. There is an illusion created that there is a smart algorithm that can translate from English to Spanish. But human beings are actually doing the work. There is algorithmic innovation, but they require big data.

“Whenever something requires big data, it means the algorithm isn’t really smart, and it requires information from many people. But somehow we are pretending that the people aren’t there. It’s like a giant puppet show.”

This silent charade that Lanier speaks of is the subject matter of his new book Who Owns The Future? The crux of his argument is that as technology has become more advanced, so too has our dependency on information tools.

The way to grow this knowledge-based economy, he argues – quite convincingly – is to put a price on all of our ideas.

While the information common folk continue to share for free generates massive fortunes for elite companies like Google and Facebook – basically groups of individuals with the fastest networks of computers – a large section of society will rapidly slide into poverty. These people will be what we now call the middle class.

The fear of technology creating mass unemployment is something that predates the modern world, Lanier admits.

“The idea that better technology can mean a loss of employment is a very old fear. It was initially expressed in the 19th century in [England in the Industrial Revolution] through the Luddite riots, and in the early works of Marx. The birth of science fiction with HG Wells was another expression of this. But for the most part, in the 20th century, it turned out this fear was unfounded.

“The reason why I’m so concerned about this 19th-century fear coming back is not because of any factual circumstances. It’s because of a fiction that has been created through the rise of technology: the idea that the creation of works of information should be where monetisation stops.”

Lanier believes that as technology becomes more advanced, it’s inevitable that information is the only remaining area that individuals can contribute to in an ongoing way. I ask him to give me an example to back up this claim: “Well, once there is a form of manufacturing such as 3D printing – or other techniques that can create goods apart from a traditional factory – there are still people performing labour: in terms of designing the objects that are created, or contributing to cloud software data points.

“But they will be no longer working in a factory. So the place where the action happens is in the information sphere. If we are deciding that information is free, we are ultimately saying that advanced technology must now mean unemployment. The way to avoid this scenario is simple: decide that information should be paid for.”

If you haven’t come across Lanier’s work before, and you’ve read this far, you may be mistaking him for an out-of-touch tech-Luddite, or an orthodox Marxist, who has a personal vendetta against Silicon Valley corporations.

In reality, however, he is part of the system he criticises: a point he reiterates several times throughout our interview. He has dedicated his entire career to pushing the transformative power of modern technology to its limits. He coined the phrase “Virtual Reality”, and helped create the world’s first immersive avatars. His technological research has been linked with both UC Berkeley and Microsoft. In the past he has sold various start-up ideas to Google.

One project he worked on back in the 1990s involved making predictive models of parts of the human brain. Google bought the idea from Lanier – via the University of California – eventually incorporating parts of it into a product they are releasing this year, Google Glass: an augmented computer headset that gives humans access to computers at all times without the use of hands to input information.

If Lanier was once a willing participant in the utopian idea that technology will ultimately free the human spirit, he now feels there is danger in a society whose only goal is to build faster technology. “Some of the folks at Google, Larry Page in particular, really believe in this AI [Artificial Intelligence] idea: building this global brain.”

In his book, Lanier describes the impact that Google Books could have on the future of human information. This project, which started in 2004, aims to create the largest body of human knowledge ever available on the Internet, by scanning millions of library books and turning them into a gigantic digital publishing venture. So far it has led to several multi-million-dollar lawsuits for breach of copyright, including one with the Authors Guild in the United States.

Lanier says that while we have yet to see how Google’s book-scanning enterprise will eventually play out, a machine-centric vision of the project might encourage software to see books as snippets of information rather than separate expressions of individual writers.

“If you believe in this idea then books are not [seen as] individual expressions of people, but statistical data of humanity as a whole. We can talk about this in terms of copyright, and individual rights, but I think it’s even more profound than that. It’s really about whether or not individuals even exist.

“From the point of view of consuming everyone’s data just to run some giant algorithm, well, that doesn’t even acknowledge the existence of individuals any more. Society then becomes run on the principle that people are not important, but rather part of this huge meta-organism.”

Lanier believes that if we continue on our present path, whereby we think of computers as passive tools, instead of machines that real people create, our myopia will result in less understanding of how both computers and human beings work.

“Presently we treat computers as being the organising principles that gather all this data for the biggest cloud computers so they can build bigger models. The ideology behind many of these big companies – which includes Facebook and Google – is to build this global brain that can be a successor creature to mankind. The reality is that this global brain is a nonsense concept that doesn’t exist. It’s just the activities of real people. But creating a fiction of a global brain, it turns out, is a good profit centre, because you become almost like a private spy service. You micromanage people based on your dossiers about them, and people pay you for that. We call that advertising in the case of a company like Google.”

The longer Lanier describes the ideologies of the people running Silicon Valley, the more they start to sound like an organised religion. He points out that there is still a crossover between counterculture spirituality and tech culture. This was particularly prevalent in companies like Apple, which embraced this guru ethos from day one of their existence.

Lanier suggests this religion-like attitude pervading the majority of the world’s major IT companies may have something to do with the fact that before the computer nerds showed up in California in the late 1970s it was already home to Tibetan temples, Hindu ashrams and many other forms of Eastern religion.

Putting one’s absolute faith in technology, he postulates, is the product of a new emerging religion: one that is expressed through an engineering culture. Considering the enormous power that companies like Google and Facebook hold over billions of human beings on the planet, should we be particularly worried?

“Yeah,” he replies. “I do think that there is a new religion that is distorting business in the world of digital networks. Since I finished writing this book, Ray Kurzweil [the avid techno-utopian] has actually joined Google. He is their head of engineering now. I think that it’s not so much a match made in heaven as a match made in the virtual world, where we are all supposed to be uploaded to when we die!”

In an interview published in the Wall Street Journal last year, Kurzweil – the futurologist who strongly advocates the idea of Artificial Intelligence – said he believes that by the year 2029, computers will be fully conscious beings that have the ability to think for themselves.

Lanier maintains this kind of talk is considered normal for companies like Google. He says his preference would be for these multi-billion-dollar digital companies to start behaving like typical corporations again, rather than like disciples of a religious movement who define their existence by eschatological-messianic visions.

“It is very strange. Imagine if there was some digital corporation saying, we are making decisions, not on the basis of a profit motive, or on the basis of our shareholders, or investors. But instead we have this religious motive, where we believe that the end of time is coming, and we are trying to prepare the entire world for that. Well, that is exactly what companies like Google are telling us, that rather than being businesses they are trying to create this global brain that will inherit the earth for people. I just feel that somebody has to point out this is very odd, and the whole idea should be treated as suspect.”

On the closing page of his book he says the future society we will live in as a species really comes down to a matter of choice: “Whatever it is people will become as technology gets very good, they will still be people if these simple qualities hold.”

I play devil’s advocate and imagine a dystopian future where mankind decides to choose technology over people. What does Lanier envision, if we collectively, or unwillingly, end up in such a predicament?

“Right now there is this intense fascination about ‘the singularity’, the moment this global brain or machine intelligence takes over. The idea is that this post-human technology we have created becomes the primary species. We become obsolete at that point, and that is the moment when history stops and starts again, on some new terms that we cannot comprehend as human beings. This is a point that is advocated by people like Kurzweil and many others. It’s an idea that is particularly popular at Google and other high-tech companies.”

If companies within Silicon Valley are expecting such an apocalyptic outcome, are plans being made to deal with such a world? What would it look like?

“In their minds humans can’t comprehend what is on the other side of it. But they are not treating this idea like a global-massive cyber attack, where everything would fall apart. Instead they are saying, ‘This is great, the new artificial intelligence is happening.’ I would say this is just some mass suicide, and a massive dereliction of duty. In order for us to have a sense of civilisation, we need to think of the consequences of what we do. But companies like Google want to abandon that. It’s as if they are proposing that the Roman Empire should fall, just out of some bizarre religious fervour, where there must be something transcendent on the other side of it. That is a terrible impulse. This technology-religion reminds me of a suicide cult, where people believe if they kill themselves they will all transcend into other people.”

As I type these words on my Apple MacBook Pro, check my Facebook page at regular intervals, and log onto Google more times than I can possibly remember in a single day, it’s sobering to think the people who engineer the tools we use to make our lives more comfortable might also be the ones who sow the seeds of our own destruction.

We’ve spoken for nearly an hour now, and Lanier looks like he’s got other plans. I leave him with one final question. The title of his new book – Who Owns The Future? – poses an open-ended question. Is it one he has an answer to?

“A key concept for any functioning society is that people are real, and they do exist. If you don’t start from that point of view, it’s hard to imagine creating a world that serves people at all, because you are not even acknowledging them to begin with. In this high-tech world, we don’t really acknowledge the reality of people.”

My conversation with Lanier has left me with a question of my own: are we really in control of technology, or just in a position to make it progress?

Somehow this interview has brought me back to my days at secondary school in Dublin, in the mid-1990s, when we had a weekly class in very basic computer skills. My teacher’s words have never left me: you can advance technology but you cannot control it. It’s a phrase I would like to put to Lanier now, but he has already gone back upstairs to his hotel room.

Perhaps I’ll Facebook him later about it, or Tweet him. Maybe we can Skype or do a Google Hangout. These services are used by millions every day who upload terabytes of information. All of them are free, but reading Lanier you realise they may end up costing us far more than we could ever imagine.

If we are to take back the control that technology companies have been stealthily stealing from us for decades, it’s going to take more than merely using the correct privacy settings on Facebook. That said, I’m going to check mine now and see if I can claw back some ownership of the information that makes up my life. Before it’s too late.

Who Owns The Future? by Jaron Lanier is published by Allen Lane